“As we have consistently said, this rushed regulation misunderstands our platform and the way young Australians use it. Most importantly, this law will not fulfill its promise to make kids safer online, and will, in fact, make Australian kids less safe on YouTube. We’ve heard from parents and educators who share these concerns.

“Even as the ban comes into effect next week, we will continue to work with the Australian government to advocate for effective, evidence-based regulation that actually protects kids and teens, respects parental choice, and avoids unintended consequences.”

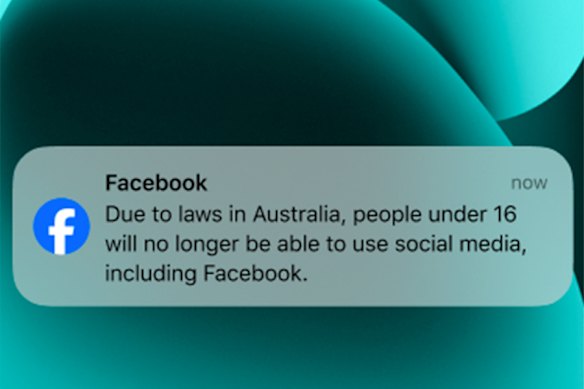

As the ban inches closer, the tech giants begrudgingly in charge of enforcing it have been drip-feeding the masses logistical information – though a lot remains unknown.

Cloak-and-dagger is not an uncommon modus operandi for social media providers, and, although eSafety has emphasised the need for transparency, the year since the world-first Online Safety Amendment (Social Media Minimum Age) Bill 2024’s passing has been no different. What will life actually look like for under-16s when they wake up on December 10?


How will age verification actually work?

Platforms are expected to take “reasonable steps” to prevent those under 16 in Australia from having accounts. Exactly what those reasonable steps are, however, has not been explicitly defined by eSafety.

At minimum, they must implement age assurance technology, but the independent regulator’s guidelines do not require a specific type or method. This is in line with the Age Assurance Technology Trial’s final report. Published in August, it also did not endorse specific methods or technologies.

There is specific guidance on what not to do. Platforms, for example, must not rely solely on users declaring their age or date of birth, a common practice on alcohol brand websites.

While the most accurate method of age assurance is verification with government identification documents, such as a passport or driver’s licence, eSafety says platforms must not rely solely on that method, and “reasonable alternative means” for age assurance must be offered to users. This is because users have concerns about the security of their private information, and where it will be stored.

OK, but what age verification methods will each social media platform be using?

Detail on age verification is limited, despite the looming ban. Snapchat – which uses every chance it can to convey its strong disagreement with its classification as an age-restricted platform – says at least 435,000 users it has identified as under 16 will start receiving in-app, email and SMS notifications from today.

These notifications will include details about how they will be impacted by the social media ban, and information about age verification methods.

Those the platform believes to be under-16 (due to declared age or “Snapchat’s inferred age modelling signals”) are expected to verify their age through third-party providers ConnectID (secure verification through an Australian bank account) or k-ID (government-issued identification document validation, and facial age estimation via selfie).

How each platform plans to verify a user’s age

- Meta (Facebook, Instagram, Threads): The platform has not confirmed its age assurance methods, but did say those who want to appeal an under-16 age classification will have to supply either a “video selfie” or a government-issued ID to third-party provider Yoti.

- TikTok: There has been no confirmation of its age assurance methods, but the video-sharing platform already implements age assurance technology for its Live function, where users must supply photos of their government-issued ID and submit three selfies from different angles.

- Snapchat: The multimedia messaging platform confirmed today it will be using third-party providers ConnectID and k-ID to verify age through a secure connection to an Australian bank account, scans of government-issued ID documents, or facial age estimation via selfies.

ESafety recommends platforms employ a “waterfall approach” to age assurance, which consists of multiple methods and technologies, starting with the least complicated, least invasive option.

That’s normally the inference method, where a user’s age is deduced with location data, such as an IP address, or behavioural data, such as the age of the account itself, or if the account engages with content targeted at children or early teens.
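As a rough illustration only, a waterfall of this kind could be sketched in Python along the following lines. Every name in it is hypothetical, as are the five-year account threshold and the three-year buffer around facial estimates; none of it is drawn from eSafety’s guidance or from any platform’s actual system.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

MINIMUM_AGE = 16  # threshold set by the social media minimum age law


@dataclass
class User:
    # All fields are hypothetical signals invented for this sketch.
    declared_age: int
    account_age_years: float
    engages_with_child_content: bool
    facial_estimate: Optional[float] = None  # None means no selfie was supplied
    id_document_age: Optional[int] = None    # None means no ID was supplied


# Each check returns True (16 or over), False (under 16) or None (inconclusive).
Check = Callable[[User], Optional[bool]]


def inference(user: User) -> Optional[bool]:
    """Least invasive step: uses only signals the platform already holds."""
    if user.declared_age < MINIMUM_AGE:
        return False  # the user has declared themselves under 16
    if user.engages_with_child_content:
        return None  # a warning sign, so escalate rather than decide here
    if user.account_age_years >= 5:
        return True  # assumed threshold: a long-held, adult-declared account
    return None


def facial_estimation(user: User, buffer_years: float = 3.0) -> Optional[bool]:
    """Only trusts estimates well clear of the threshold (assumed buffer)."""
    if user.facial_estimate is None:
        return None
    if user.facial_estimate >= MINIMUM_AGE + buffer_years:
        return True
    if user.facial_estimate <= MINIMUM_AGE - buffer_years:
        return False
    return None


def id_document(user: User) -> Optional[bool]:
    """Most invasive step, so it is tried last."""
    if user.id_document_age is None:
        return None
    return user.id_document_age >= MINIMUM_AGE


# Ordered from least to most invasive.
WATERFALL: List[Tuple[str, Check]] = [
    ("inference", inference),
    ("facial_estimation", facial_estimation),
    ("id_document", id_document),
]


def assess(user: User) -> Tuple[str, Optional[bool]]:
    """Run checks in order and stop at the first conclusive answer."""
    for name, check in WATERFALL:
        result = check(user)
        if result is not None:
            return name, result
    return "unresolved", None
```

In this sketch, a long-standing account with an adult declared age is cleared at the inference step and never asked for a selfie or ID, while an account with conflicting signals moves down the list until one step gives a conclusive answer. An “unresolved” result stands in for whatever manual review a platform might offer.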

In the United Kingdom, since July, Pornhub has used a combination of email-based age estimation, and credit card, mobile phone or open banking verification.


Another method is facial age estimation, but it is not always accurate, and skin tone is a “known source of bias”. Predictive technologies that work for over-18s are also known to struggle with accuracy for younger faces.

Meta and TikTok pointed this masthead in the direction of their representatives’ opening statements to the Environment and Communications References Committee, delivered on October 28. Both emphasised their focus is on complying with the social media minimum age act, without providing any concrete details as to how.

We do know that TikTok already implements age assurance technology for users who want to livestream, as the requirement is they must be aged 18 or over. To confirm their age for that, users must supply photos of their government-issued ID, and submit selfies taken from three different angles to prove they’re a real person.

eSafety Commissioner Julie Inman Grant, pictured with Communications Minister Anika Wells (left) at a press conference in November, said platforms weren’t assessed based on the harms they pose to children, but whether their “sole or significant purpose” is social interaction, which is why Roblox, for example, isn’t included despite safety concerns. Credit: Alex Ellinghausen

A spokesperson has said the platform would “use a multi-layered approach” to remove accounts held by users they suspect are underage.

In an email alert sent last week, Meta said it is “initially adopting the least privacy intrusive measure to understand age and will then seek additional information when it has reason to doubt the information provided.”

Kick told this masthead in early November it “intends to introduce a range of measures, which will be communicated to our community in due course”. A representative for Reddit said the platform did not have anything to share at this time, and representatives for Google and X did not respond.

Will my social media account be removed by exactly 12.01am on December 10?

A key word in the eSafety Commissioner’s regulatory guidelines is “from”.

The social media minimum age act is effective from December 10, which is when eSafety will be looking for evidence that accounts already identified as belonging to under-16s have been removed, and that age assurance methods have been implemented to prevent under-16s opening new accounts.


eSafety expects platforms to have been monitoring common circumvention methods – increasing declared age to over 16, changing location settings – before December 10, and to have flagged accounts showing that type of activity for review.

Meta has indicated that it is aiming to comply by December 10, confirming it will begin removing the Facebook, Instagram and Threads accounts of children aged 13 to 15 on December 4. At least 300,000 under-16s have already been identified as Instagram users. New accounts for under-16s will also be blocked from December 4.

But some under-16s may wake up on December 10 and still be able to use age-restricted apps because their age has yet to be determined.

Where is the age verification information stored? Is my personal data secure?

Prime Minister Anthony Albanese has rejected claims the policy’s age assurance digital ID feature would steal users’ information.

“It is certainly not the case,” Albanese said on radio station Nova Sydney on November 10. “This is about giving people power back to families, and this is not about government. This is about people and looking after our youngest Australians.”

Platforms must comply with the Privacy Act, and eSafety strongly encourages them to use non-sensitive personal information as much as possible. If sensitive personal information – such as government IDs – is required, platforms are not expected to retain it.


One platform that already uses government IDs to verify accounts is LinkedIn, which does it through a third party. LinkedIn does not receive biometric data, photos, numbers, or expiry or issue dates associated with the documents. The third party says it generally only retains the personal data for as long as necessary to fulfil the verification.

Tinder does photo checks and verifications in-house, and says it deletes biometric data within 24 hours.

Discord, which is not an age-restricted platform under the social media ban but did roll out age assurance in Australia and the United Kingdom this year, says it deletes facial images and identification documents directly after ages are confirmed.

In October, however, hackers gained access to “a small number of government ID images” from users who had appealed an age determination through the platform’s manual review process with its trust and safety team.

The main risk to the security of personal data, according to an expert from VerifyMy, is dodgy third-party providers that have not signed up to the voluntary global standards for age assurance, or scammers posing as third-party age assurers.

Will my account disappear completely and the content be deleted? Or will I get it back when I turn 16?

Meta has confirmed under-16s will be able to archive their existing Facebook, Instagram and Threads accounts so they can re-access them when they turn 16.

Last week, Meta started sending out a combination of in-app messages, notifications, SMS messages and emails to users it has identified as under 16, giving them two weeks’ notice before their profiles are removed. These users are encouraged to download any photos, videos or posts before they lose access to their accounts.


Snapchat also encourages under-16s to download their data “as soon as possible” and cancel paid subscriptions to services including Snapchat+ or Memories+. From December 10, their accounts will be locked for up to three years, or until the user turns 16 and chooses to reactivate their account.

TikTok Australia’s public policy lead for content and safety, Ella Woods-Joyce, said in a hearing that the platform will give users identified as under 16 “a choice”.

“We will deactivate, and that will give the user the ability to archive their content that they already have, but, if they prefer, they can delete their account,” Woods-Joyce said on October 28. If they choose to delete their account, the account information will be deleted.

Representatives for Google, Kick, Reddit, and X did not answer this masthead’s questions about whether accounts will be completely deleted, or deactivated with the possibility of restoration. Generally, you can download your account’s data on each platform through account settings.

What happens if I am mistakenly identified as under 16?

There is the possibility that social media users above the age of 16, or those who are not Australian residents but happen to be in Australia on December 10, could get caught up in the ban.

ESafety stipulates that users should have accessible options to dispute a platform’s age determination, and that communications from the platforms throughout the appeals process should be clear and timely. A “good practice” example in its regulatory guidelines includes the option to reactivate an account after a successful appeal.

How the reviews and reactivation process will actually play out has yet to be revealed by most platforms. Snapchat’s help page does not have information about its appeals process, but it’s believed the platform will have one. Kick, Reddit, and TikTok declined to answer this question when asked by this masthead. Google and X did not respond.


Meta revealed last week that users who are incorrectly classified as underage will have to verify their identity through either a “video selfie” or by supplying government-issued ID to Yoti. Users who have already changed their age to above 16 in their account settings will also have to verify their age.

Will I be punished if I circumvent the social media ban?

No – and neither will your parents.

The responsibility is on platforms to comply with the law, which means they are responsible for confirming a user’s age and ensuring they can’t have an account if they are under 16.

Non-compliance carries a maximum penalty of $49.5 million for the platform – perhaps that is why some are suggesting that application stores confirm a user’s age before they can download an application.

ESafety expects platforms to detect when a virtual private network, or VPN, has been used, and to detect if that user is in Australia. A VPN masks your IP address and can therefore make it appear as if you’re in another location. Platforms are also encouraged to have reporting systems in place, so users can flag other accounts they suspect belong to under-16s.
