January 22, 2026 — 5:00am

Snapchat is failing to prevent under-age users from reclaiming their banned accounts, with families reporting that teenagers can access their original profiles without facing age-verification checks, a loophole one mother says triggered a mental health crisis for her teenage daughter.

There are mounting concerns that Snapchat’s enforcement of Australia’s world-first under-16 social media restrictions is failing vulnerable teenagers, as users complain it is stonewalling reports of under-age users.

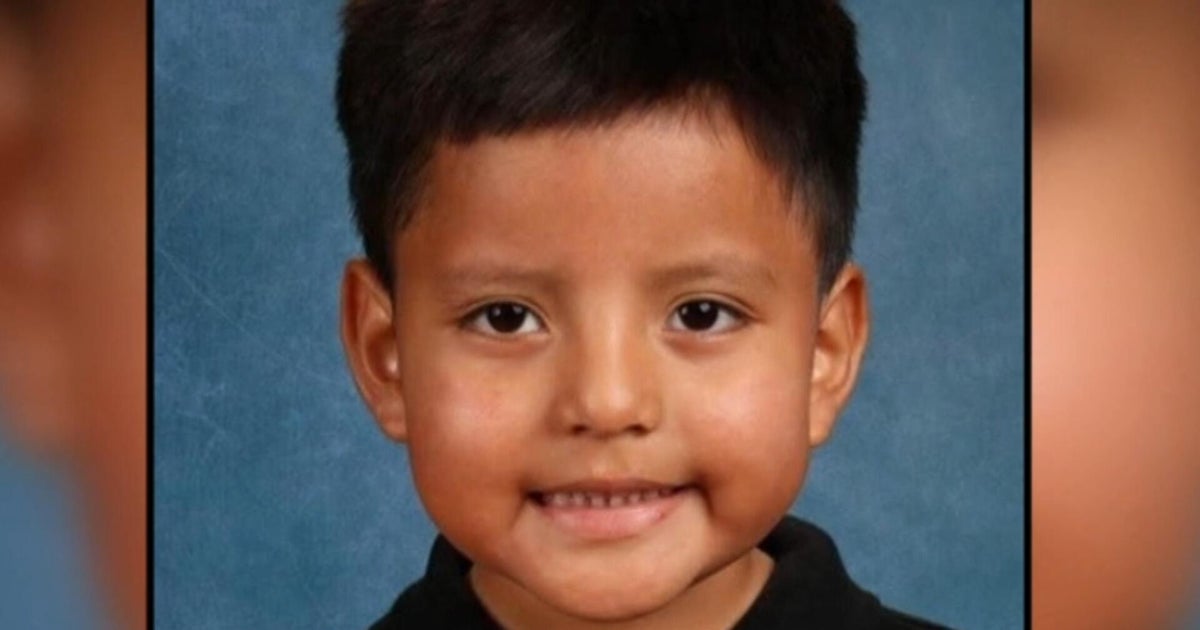

One 13-year-old girl from regional NSW was removed from Snapchat when Australia’s social media ban took effect on December 10. Her mental health “vastly improved,” her mother said.

Then, around 10 days ago, the teenager and her friends discovered they could get back on – not just with new accounts, but with their original profiles. Snapchat was no longer asking them to verify their age. Within a week, the girl was self-harming again.

“We have put a ban in [at home], but we need the government social media ban to work,” the mother told this masthead.

When the mother, who spoke anonymously to protect her daughter’s privacy, reported her child’s friends who were also under-age, Snapchat directed her elsewhere: “If you are not the parent of the reported user, we recommend that you encourage their parent to contact us directly.”

That policy appears to be Snapchat’s own threshold, not a requirement under Australian law.

The Online Safety Amendment Act places the onus on platforms to take “reasonable steps” to prevent under-16s from holding accounts. It does not specify that only a biological parent can report, or that platforms can decline to act based on who makes the report. The eSafety Commissioner’s guidance requires platforms to “detect and deactivate or remove existing under-age accounts” regardless of how they come to the platform’s attention.

Snapchat said it could not verify what happened in this instance without a support ticket ID from the parent. It told this masthead it was “generally difficult to verify a parent/child relationship” and that it does not confirm outcomes to reporters “to protect account security, in the event that the reporter is not actually the user’s parent”.

A spokesman for Communications Minister Anika Wells said it was “deeply concerned by any report of young people experiencing harm online” and that all restricted platforms “must have robust, effective age-assurance systems in place to meet their legal responsibilities.”

“We don’t expect implementation to be perfect, but we expect progress and continuous improvement, and we will hold social media companies accountable,” the spokesman said.

A Snap spokeswoman said under-age accounts “cannot be reactivated without passing age verification” and that all users with declared ages under 16 “would have needed to face-scan or use ID to have their account unlocked.”

The company said it was “working to prevent under-age users from accessing Snapchat by not allowing under-18s to change their date of birth, by blocking new accounts on the same device, and by blocking users from setting up new accounts with different usernames.”

Accounts from multiple families suggest these measures are not working as intended.

The mother from NSW said she reported her daughter’s accounts to Snapchat repeatedly over three to four days before the platform removed them, but all of her daughter’s friends remain on the platform.

“If you try to report outside the app, it suggests that you report inside the app,” the mother said. That effectively requires parents to create their own account to flag their children.

The mother contrasted this with Instagram, where she said reporting an under-age account took “about 10 seconds” and asked for the child’s date of birth.

Before the ban, Snapchat had 440,000 Australian accounts held by 13- to 15-year-olds – more than any other restricted platform.

The mother said her family was not relying on the ban alone.

“We have a lockbox at night that all the phones go into, so we’re not passive,” she said. “The blocks on the other sites – like Facebook and TikTok – are working and are very helpful, but it’s different for different people. From talking to family, it’s very piecemeal at the moment, which makes it more stressful.”

Prime Minister Anthony Albanese last week announced 4.7 million accounts had been closed across the 10 restricted platforms. But the figure included inactive accounts, duplicates, and profiles already deleted but lingering in backend systems.

“Platforms will need to take continuous action to find under-age accounts that they may have missed, and to prevent circumvention, including the creation of new under-aged accounts,” eSafety Commissioner Julie Inman Grant said in a statement.

“This will involve a number of changes and improvements in technology which will take some time to replicate through the system.”

She said the regulator was aware some under-16s were still on social media, and was monitoring for systemic failures that may amount to a breach of the law.

In November, Snapchat said it “strongly disagrees” with being included in the ban.

“Disconnecting teens from their friends and family doesn’t make them safer – it may push them to less safe, less private messaging apps,” the company wrote.

Platforms face fines of up to $49.5 million for failing to take “reasonable steps”, though the eSafety Commissioner has given them an unspecified grace period.

If you or someone you know needs support, contact Lifeline on 13 11 14, Kids Helpline on 1800 551 800, or headspace on 1800 650 890.

David Swan is the technology editor for The Age and The Sydney Morning Herald. He was previously technology editor for The Australian newspaper.